What Are the Main Challenges of AI Agents? Problems and Solutions Explained

AI agents are no longer limited to simple task execution. They are increasingly used to manage workflows, interact with tools, retrieve information, and make decisions across systems. As organizations rely more on these systems, a clear picture is emerging: while AI agents offer strong capabilities, they also introduce practical challenges that must be understood and addressed.

This article explains the challenges of AI agents by breaking them down into real operational problems and the solutions commonly used to manage them. The goal is not to promote a specific approach, but to help readers understand how AI agents behave in real-world environments and what it takes to deploy them responsibly.

Why AI Agent Challenges Matter More Now

As AI agents move from controlled experiments into production systems, their limitations become more visible. Unlike traditional AI models that respond to single inputs, agents operate over longer periods, interact with external tools, and often work with incomplete information. This makes their behavior harder to predict and manage.

With growing interest in autonomous AI systems in 2026, understanding these challenges is critical for teams designing, buying, or managing agent-based systems. Ignoring them can lead to unreliable outputs, higher costs, and increased operational risk.

Challenge 1: Context Loss in Long Tasks

Context loss is one of the most common and impactful issues in AI agent systems. It becomes especially visible when agents are expected to handle long conversations, multi-step workflows, or tasks that unfold over extended periods. While modern AI agents can process large amounts of information, they still operate under technical constraints that affect how much context they can retain and use effectively.

Understanding why context loss happens, what problems it creates, and how it is addressed is essential for anyone designing or using AI agents in real-world systems.

What Goes Wrong

AI agents often lose track of earlier inputs, decisions, or constraints as a task progresses. This usually happens during long conversations, extended research tasks, or workflows that require many intermediate steps.

At a basic level, most AI agents rely on language models that process information within a limited context window. Once that window is exceeded, earlier details may be partially or completely dropped. As a result, the agent may no longer “remember” instructions, assumptions, or conclusions that were important earlier in the task.

At a more advanced level, context loss can also occur when agents switch between tools, handle parallel subtasks, or operate across multiple sessions. Each transition increases the risk that important information is not carried forward correctly.

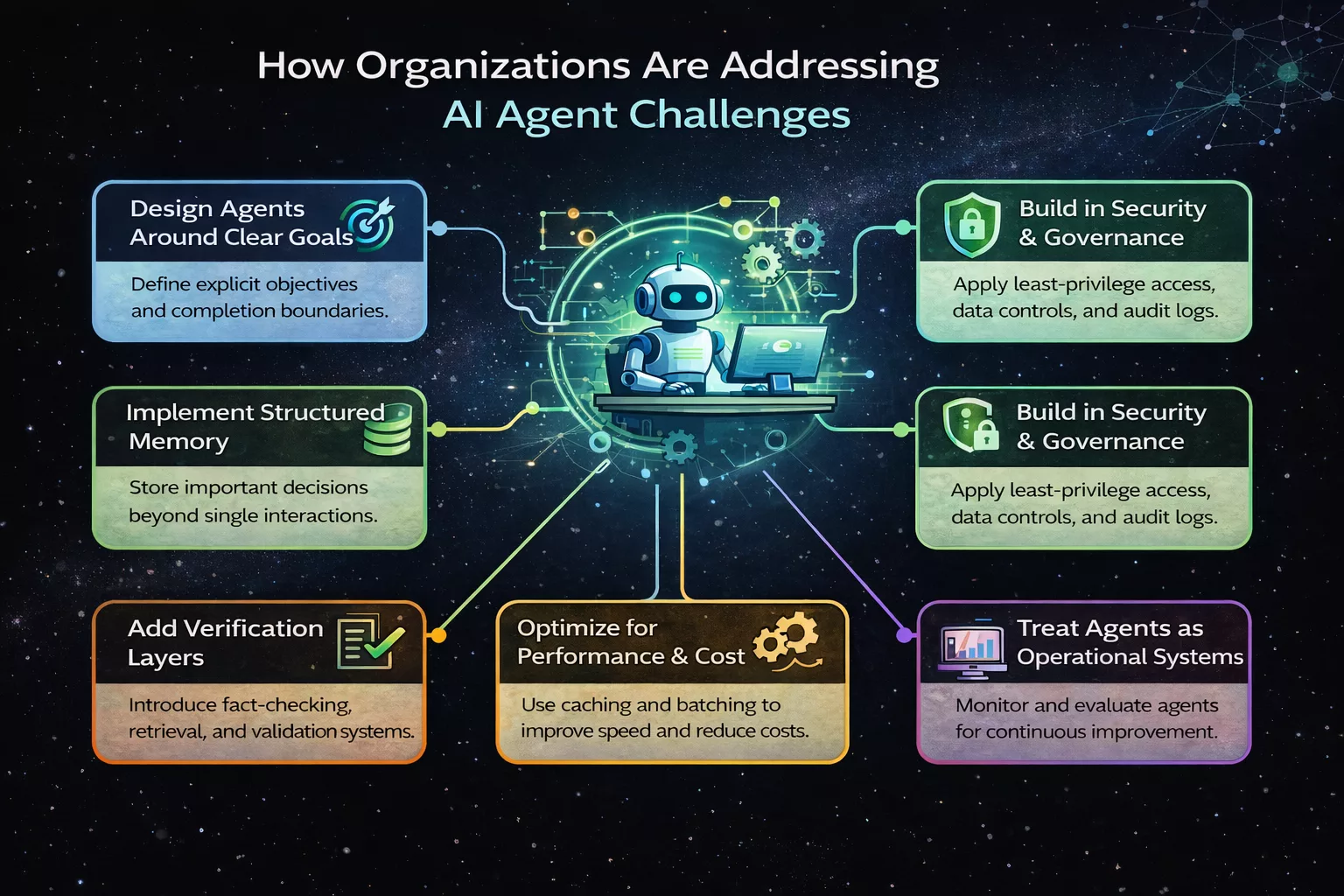

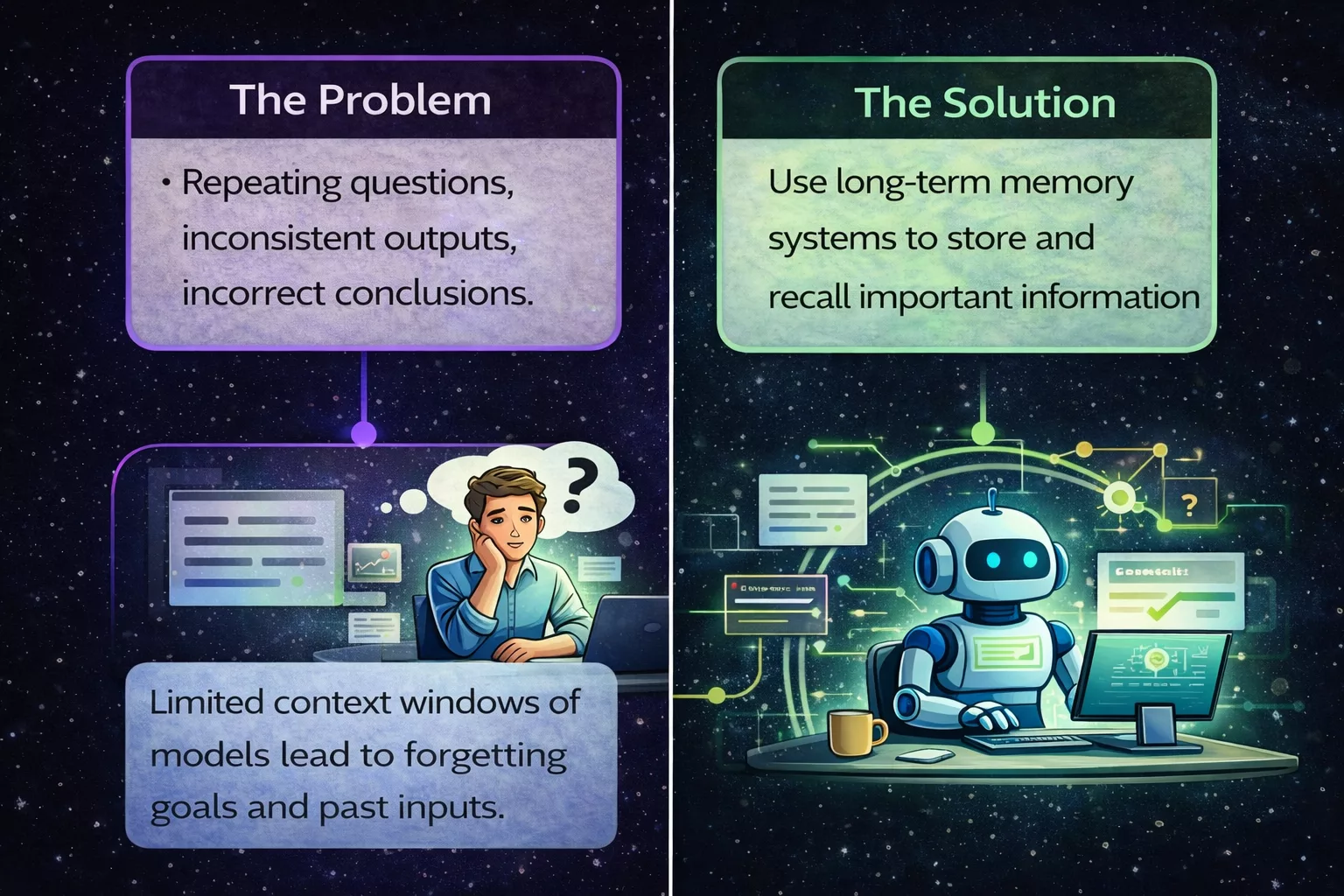

The Problem

When context is lost, the quality and reliability of the agent’s behavior decline. The agent may ask questions that were already answered, contradict previous decisions, or generate outputs that no longer align with the original goal.

In operational environments, this creates several risks. Workflows may become inefficient as tasks are repeated. Outputs may become inconsistent, making it harder for humans to trust the system. In customer-facing or decision-support scenarios, context loss can lead to confusion or incorrect outcomes.

From a systems perspective, context loss also makes debugging difficult. It becomes harder to determine whether an error is caused by model limitations, memory handling, or workflow design. Over time, this reduces confidence in deploying agents for complex or long-running tasks.

The Solution

The primary way to address context loss is to move beyond relying solely on the model’s internal context window. Instead, modern agent systems use external memory and structured context management.

At a basic level, this involves storing key information such as goals, constraints, and decisions in an external memory store. When the agent needs to act, it retrieves only the most relevant pieces of information rather than the entire conversation history.
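
As a minimal sketch of this idea, the Python below assumes a simple in-process store rather than any particular memory product. Goals and constraints are always returned, while past decisions are ranked by word overlap with the current step so that only the most relevant ones are sent to the model. The `TaskMemory` and `MemoryEntry` names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryEntry:
    kind: str  # "goal", "constraint", or "decision"
    text: str


@dataclass
class TaskMemory:
    """Hypothetical external memory kept outside the model's context window."""

    entries: list[MemoryEntry] = field(default_factory=list)

    def remember(self, kind: str, text: str) -> None:
        self.entries.append(MemoryEntry(kind, text))

    def context_for(self, step: str, max_decisions: int = 2) -> list[str]:
        # Goals and constraints are pinned: they are always sent to the model.
        pinned = [e.text for e in self.entries if e.kind in ("goal", "constraint")]
        # Past decisions are ranked by how many words they share with the current step.
        step_words = set(step.lower().split())
        decisions = sorted(
            (e for e in self.entries if e.kind == "decision"),
            key=lambda e: len(step_words & set(e.text.lower().split())),
            reverse=True,
        )
        return pinned + [e.text for e in decisions[:max_decisions]]


memory = TaskMemory()
memory.remember("goal", "Draft a migration plan for the billing database")
memory.remember("constraint", "No downtime during business hours")
memory.remember("decision", "Use PostgreSQL 16 as the target version")
memory.remember("decision", "Marketing copy will be reviewed next week")
memory.remember("decision", "Run the billing migration in two phases")

# Only the pinned items plus the single most relevant decision reach the model.
print(memory.context_for("schedule the billing migration phases", max_decisions=1))
```

Word overlap is a deliberately crude relevance signal; the point is the structure, where what the agent must remember is stored explicitly instead of hoping it survives in the conversation history.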

At a more advanced level, systems use vector databases, long-term memory modules, or hierarchical memory structures. These allow the agent to recall past information based on relevance rather than recency. Some systems also summarize past interactions into compact representations that preserve meaning while reducing size.
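
Retrieval by relevance rather than recency can be illustrated without a vector database. The sketch below uses bag-of-words count vectors and cosine similarity as a stand-in for learned embeddings; a production system would swap in an embedding model and a vector store. The function names `embed`, `cosine`, and `recall` are illustrative only.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": word counts. Real systems use a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def recall(query: str, history: list[str], k: int = 2) -> list[str]:
    """Return the k past messages most similar to the query, regardless of their age."""
    q = embed(query)
    return sorted(history, key=lambda h: cosine(q, embed(h)), reverse=True)[:k]


history = [
    "User prefers reports in PDF format",      # oldest message
    "Weekly sync moved to Thursday",
    "Quarterly revenue report is due Friday",
    "Lunch order placed for the team",         # most recent message
]
print(recall("revenue report format", history, k=2))
```

Here the oldest message is recalled ahead of more recent ones because it is relevant to the query, which is exactly the behavior a recency-based context window cannot provide.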

In well-designed agent architectures, context management is treated as a core system function. Clear rules define what information must be remembered, when it should be updated, and how it should influence future decisions. This approach allows agents to operate reliably over long tasks without overwhelming the underlying model.

Why This Challenge Matters

Context loss is not a minor inconvenience. It directly affects whether AI agents can be trusted to handle complex, real-world responsibilities. As agents are increasingly used for planning, monitoring, and decision-making, maintaining context becomes a requirement rather than an optimization.

By addressing context loss through proper memory design and workflow structure, organizations can significantly improve agent reliability, reduce errors, and enable agents to operate effectively over extended periods.

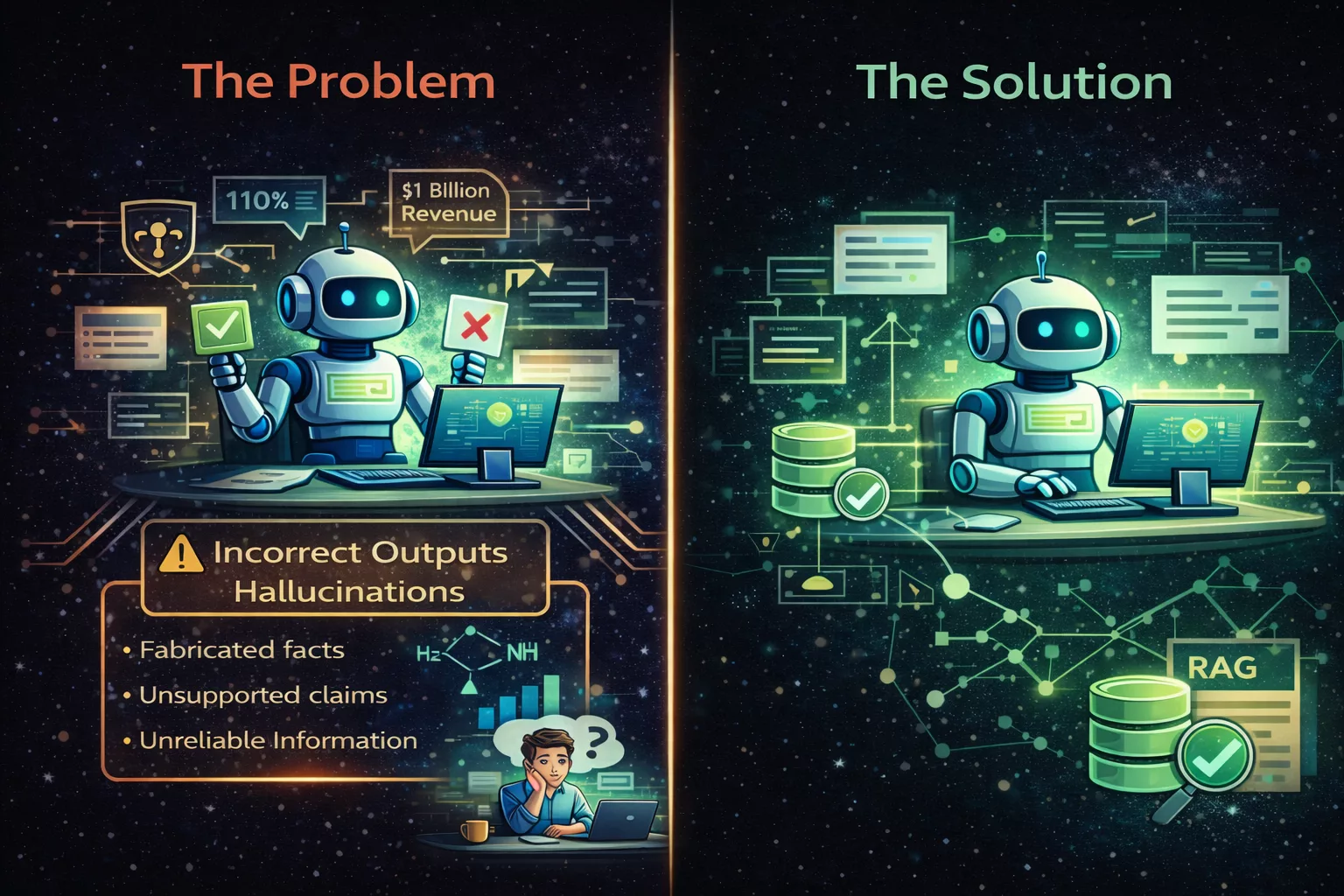

Challenge 2: Confident but Incorrect Outputs (Hallucinations)

One of the most widely discussed issues in AI agent systems is the tendency to produce responses that sound accurate and confident but are factually incorrect. This behavior is commonly referred to as hallucination. While the term may sound dramatic, the underlying issue is rooted in how language models generate text and how agents rely on those outputs during decision-making.

In agent-based systems, hallucinations are especially risky because the output may not stop at text generation. The agent may act on incorrect information, trigger tools, or influence downstream systems based on false assumptions.

What Goes Wrong

The language models behind AI agents generate responses by predicting likely next words (more precisely, tokens) based on patterns learned during training. They do not verify facts unless explicitly designed to do so. As a result, when an agent encounters incomplete data, ambiguous instructions, or unfamiliar scenarios, it may fill in gaps with plausible but incorrect information.

This problem becomes more pronounced when agents are asked to explain complex topics, summarize unfamiliar documents, or answer questions beyond the scope of their available data. Because the output is linguistically fluent, it can appear reliable even when it is wrong.

At a more advanced level, hallucinations can occur when agents chain multiple steps together. An incorrect assumption early in the process can propagate through subsequent actions, leading to compounded errors that are harder to detect.

The Problem

Confident but incorrect outputs undermine trust in AI agents. Users may assume that a well-written response is also a correct one, especially when the agent has performed reliably in the past.

In operational environments, hallucinations can cause incorrect decisions, wasted effort, or compliance risks. For example, an agent may provide inaccurate technical guidance, misinterpret policy rules, or reference non-existent data. When these outputs are used to trigger actions or inform decisions, the impact extends beyond misinformation.

From a system design perspective, hallucinations are difficult to catch automatically. Unlike obvious failures, incorrect outputs often look complete and internally consistent, making them harder to flag without additional validation mechanisms.

The Solution

The most effective way to reduce hallucinations is to limit situations where agents are forced to rely solely on their internal knowledge. Instead, modern systems combine generation with retrieval and verification.

At a basic level, retrieval-based approaches allow agents to pull information from trusted external sources before generating responses. This grounds outputs in real data rather than assumptions. When sources are cited or referenced internally, the system can also evaluate whether the response is supported by evidence.
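
A minimal sketch of retrieval-based grounding, assuming a small in-memory set of trusted documents and simple keyword scoring in place of a real search index. The function builds a prompt that restricts the model to numbered sources it must cite, and refuses to build one when nothing relevant is found. The document IDs, `TRUSTED_DOCS`, and the prompt wording are hypothetical.

```python
import re

# Hypothetical trusted knowledge base; a real system would query a search index.
TRUSTED_DOCS = {
    "policy-7": "Refunds are available within 30 days of purchase with a receipt.",
    "policy-9": "Gift cards cannot be exchanged for cash.",
    "faq-2": "Standard shipping takes 3 to 5 business days.",
}


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    q = words(question)
    scored = [(len(q & words(text)), doc_id, text) for doc_id, text in TRUSTED_DOCS.items()]
    scored = [s for s in scored if s[0] > 0]  # drop documents with no overlap at all
    scored.sort(key=lambda s: s[0], reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def grounded_prompt(question: str) -> str:
    sources = retrieve(question)
    if not sources:
        return ""  # nothing to ground on: the caller should not ask the model to guess
    listed = "\n".join(f"[{doc_id}] {text}" for doc_id, text in sources)
    return (
        "Answer using only the sources below and cite the source ID for each claim. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{listed}\n\nQuestion: {question}"
    )


print(grounded_prompt("Are refunds available after 30 days?"))
```

Because every claim is expected to carry a source ID, a later step can check the answer against the cited text instead of trusting its fluency.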

At a more advanced level, agents use validation steps such as cross-checking outputs, applying rule-based constraints, or involving secondary models to review responses. Some systems also include explicit uncertainty handling, allowing agents to acknowledge when they do not have sufficient information instead of generating speculative answers.
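
The validation and uncertainty-handling steps can be sketched as a check that runs after generation. Assuming the draft answer has already been split into sentences, each sentence must share enough content words with at least one retrieved source; otherwise the agent abstains instead of returning the draft. Word overlap is a crude, hypothetical proxy for the secondary-model or rule-based checks described above, and the 0.6 threshold is an arbitrary illustration.

```python
import re

STOPWORDS = {"the", "a", "an", "is", "are", "of", "to", "in", "and", "with", "within"}
ABSTAIN = "I don't have enough information to answer that reliably."


def content_words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def is_supported(sentence: str, sources: list[str], threshold: float = 0.6) -> bool:
    """A sentence counts as supported if most of its content words appear in one source."""
    claim = content_words(sentence)
    if not claim:
        return True  # nothing checkable, e.g. a greeting
    return any(len(claim & content_words(src)) / len(claim) >= threshold for src in sources)


def validate(draft_sentences: list[str], sources: list[str]) -> str:
    unsupported = [s for s in draft_sentences if not is_supported(s, sources)]
    # Abstain rather than return an answer that contains unsupported claims.
    return ABSTAIN if unsupported else " ".join(draft_sentences)


sources = ["Refunds are available within 30 days of purchase with a receipt."]
good = ["Refunds are available for 30 days after purchase."]
bad = ["Store credit never expires and can be transferred."]
print(validate(good, sources))
print(validate(good + bad, sources))
```

Overlap scoring cannot reliably catch a changed number or a negation, which is why stronger checks tend to be used in practice; the structural idea, verify first and abstain when support is missing, stays the same.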

Importantly, hallucination mitigation is not a single feature. It is a combination of prompt design, data access, workflow structure, and monitoring. Well-designed agent systems treat verification as part of the task, not an optional add-on.

Why This Challenge Matters

Hallucinations are not just a model-level issue; they are a system-level risk. As AI agents take on more responsibility, the cost of incorrect outputs increases. A single confident mistake can ripple through workflows, affect user trust, and create downstream errors that are costly to resolve.

Understanding and addressing this challenge is essential for organizations that rely on agents for research, analysis, or operational decision-making. Reducing hallucinations improves reliability, transparency, and user confidence, making AI agents safer and more effective in real-world use.

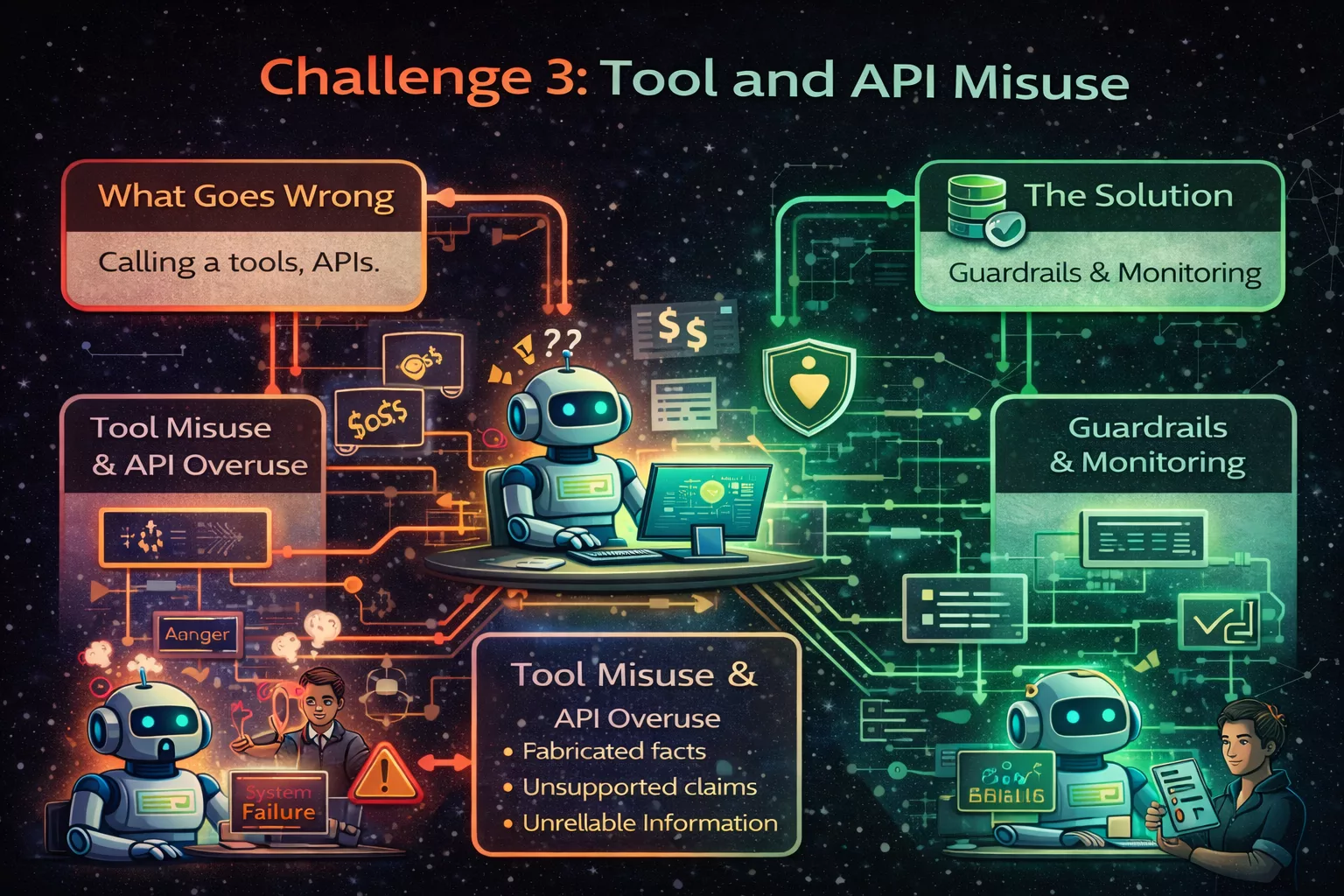

Challenge 3: Tool and API Misuse

Tool and API misuse is a common challenge in AI agent systems, especially when agents are given access to multiple external services. While tools extend what an agent can do, they also introduce complexity and risk if not managed carefully.

This challenge does not stem from malicious behavior. It arises from how agents interpret goals, decide on actions, and attempt to complete tasks using the tools available to them.

What Goes Wrong

AI agents may call tools when they are not needed, repeat the same API calls multiple times, or use incorrect parameters when interacting with external systems. In some cases, agents attempt to solve problems by invoking tools even when a direct response would be sufficient.

At a basic level, this happens because agents are optimized to “take action” toward a goal. If a tool is available, the agent may treat it as a default step rather than evaluating whether it adds value.

At a more advanced level, misuse can occur when agents operate across multiple tools with overlapping functionality or when tool descriptions are vague. Without clear guidance, the agent may struggle to choose the correct tool or sequence of actions.

The Problem

Unnecessary or incorrect tool usage has several practical consequences. It increases operational costs due to extra API calls and compute usage. It can also slow down workflows, as each tool invocation adds latency.

In more serious cases, misuse leads to failures in downstream systems. Incorrect API calls may trigger errors, consume rate limits, or produce unintended side effects. In regulated environments, misuse can also create compliance or security concerns, especially when tools access sensitive data.

From a system management perspective, excessive tool use makes agent behavior harder to predict and debug. Logs become noisy, and it becomes unclear which actions were essential and which were redundant.

The Solution

Addressing tool and API misuse requires a combination of design discipline and runtime controls.

At a basic level, tools should be clearly defined with precise descriptions of when they should and should not be used. Narrowing the scope of each tool reduces ambiguity and improves decision quality.
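
One way to make tool boundaries explicit is to keep each tool's purpose, usage conditions, and parameter types together in a single definition that is rendered into the agent's instructions. The structure below is a hypothetical sketch, not the schema of any specific agent framework; `ToolSpec`, `lookup_order`, and the field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ToolSpec:
    """Hypothetical tool definition that states when a tool should and should not be used."""

    name: str
    purpose: str
    use_when: str
    avoid_when: str
    params: dict[str, type]  # parameter name -> expected Python type

    def describe(self) -> str:
        # The text the agent sees when deciding whether this tool applies.
        args = ", ".join(f"{k}: {t.__name__}" for k, t in self.params.items())
        return (
            f"{self.name}({args}): {self.purpose}\n"
            f"  Use when: {self.use_when}\n"
            f"  Do NOT use when: {self.avoid_when}"
        )


ORDER_LOOKUP = ToolSpec(
    name="lookup_order",
    purpose="Fetch the status of one existing customer order.",
    use_when="the user gives an order ID and asks about its status.",
    avoid_when="the question is about general policy that can be answered directly.",
    params={"order_id": str},
)

print(ORDER_LOOKUP.describe())
```

Stating the "do not use" case is as important as the "use" case: it gives the agent an explicit reason to answer directly instead of defaulting to a tool call.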

At a more advanced level, systems implement guardrails that evaluate tool calls before execution. These guardrails may check parameters, enforce rate limits, or require confirmation that a tool is appropriate for the current step. Execution monitoring systems track tool usage patterns and flag abnormal behavior.
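
A pre-execution guardrail can be sketched as a check that every tool call passes through before it runs. This hypothetical `ToolGuard` verifies parameter names and types, rejects an exact repeat of an earlier call, and caps the number of calls per tool within a task; the limit values and the `ToolCallRejected` exception are assumptions for illustration.

```python
class ToolCallRejected(Exception):
    pass


class ToolGuard:
    """Hypothetical guard that validates tool calls before they are executed."""

    def __init__(self, schemas: dict[str, dict[str, type]], max_calls_per_tool: int = 3):
        self.schemas = schemas                # tool name -> {param name: type}
        self.max_calls = max_calls_per_tool
        self.call_counts: dict[str, int] = {}
        self.seen: set[tuple] = set()         # (tool, args) pairs already executed

    def check(self, tool: str, args: dict) -> None:
        schema = self.schemas.get(tool)
        if schema is None:
            raise ToolCallRejected(f"unknown tool: {tool}")
        if set(args) != set(schema):
            raise ToolCallRejected(f"{tool} expects parameters {sorted(schema)}")
        for name, expected in schema.items():
            if not isinstance(args[name], expected):
                raise ToolCallRejected(f"{tool}.{name} must be {expected.__name__}")
        key = (tool, tuple(sorted(args.items())))  # assumes argument values are hashable
        if key in self.seen:
            raise ToolCallRejected(f"duplicate call to {tool} with identical arguments")
        if self.call_counts.get(tool, 0) >= self.max_calls:
            raise ToolCallRejected(f"call limit reached for {tool}")
        # The call is allowed: record it before it is executed.
        self.seen.add(key)
        self.call_counts[tool] = self.call_counts.get(tool, 0) + 1


def allowed(guard: ToolGuard, tool: str, args: dict) -> bool:
    """Convenience wrapper for the example: True if the guard lets the call through."""
    try:
        guard.check(tool, args)
        return True
    except ToolCallRejected as err:
        print("rejected:", err)
        return False


guard = ToolGuard({"lookup_order": {"order_id": str}}, max_calls_per_tool=2)
print(allowed(guard, "lookup_order", {"order_id": "A-100"}))  # True
print(allowed(guard, "lookup_order", {"order_id": "A-100"}))  # rejected as a duplicate, then False
```

The rejection messages double as log entries, which helps with the monitoring side: repeated rejections for the same tool are a clear signal that the agent's tool selection needs attention.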

In mature agent systems, tool usage is treated as a controlled capability rather than an open-ended option. The agent is guided to reason about whether a tool is necessary, what outcome it should produce, and how that outcome affects the overall goal.

Why This Challenge Matters

Tool misuse directly affects cost, reliability, and trust in AI agent systems. As agents gain access to more powerful tools, the consequences of incorrect usage increase.

By implementing clear tool boundaries and monitoring mechanisms, organizations can ensure that agents act efficiently and responsibly. Managing this challenge effectively allows AI agents to scale while maintaining predictable behavior and controlled risk.

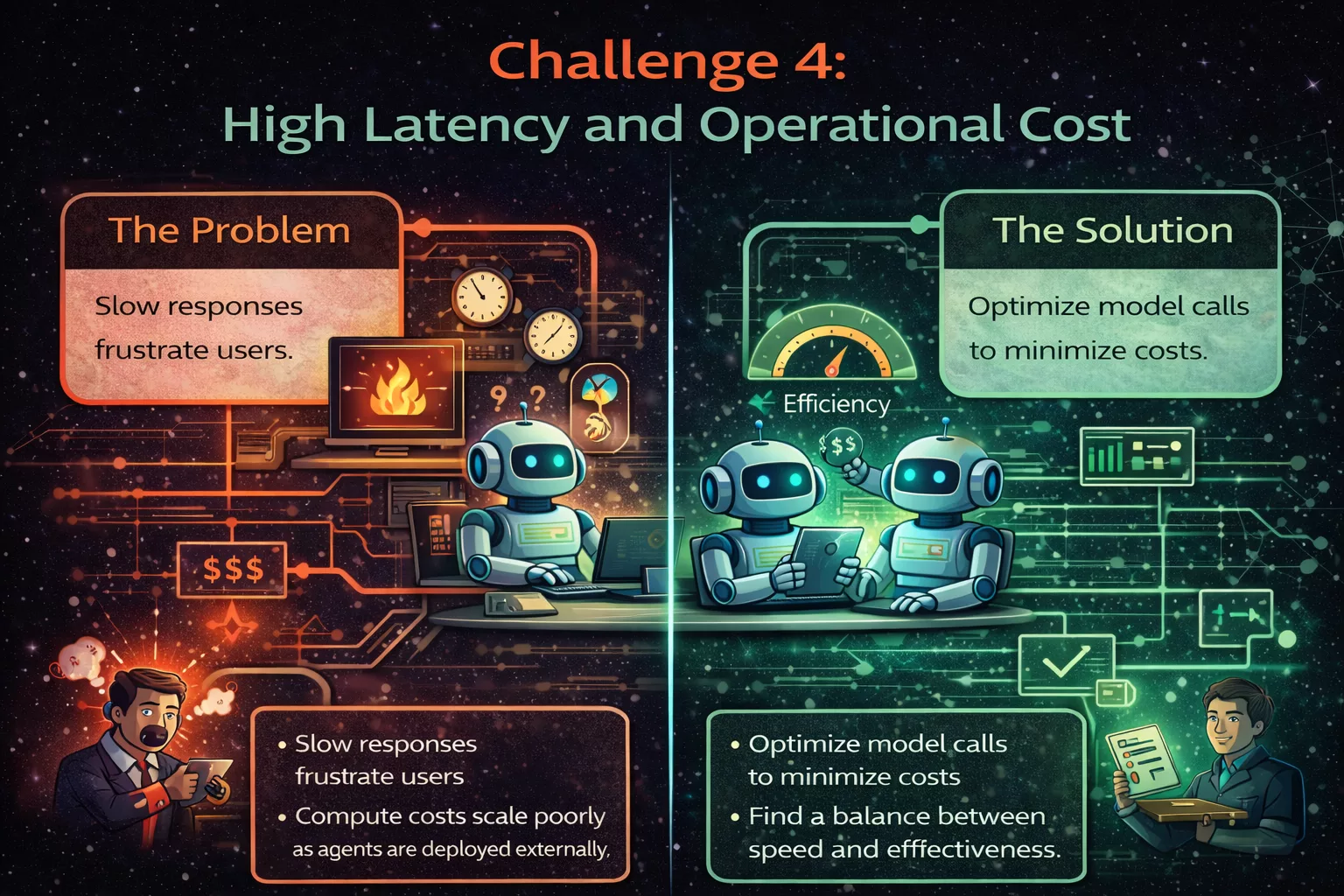

Challenge 4: High Latency and Operational Cost

High latency and rising operational costs are among the most practical challenges organizations face when deploying AI agents at scale. While AI agents are designed to handle complex, multi-step tasks, the way they interact with models, tools, and external systems can introduce delays and significantly increase infrastructure expenses.

This challenge becomes especially visible in production environments, where performance expectations and cost controls are strict.

What Goes Wrong

AI agents often rely on multiple model calls, tool invocations, and external API interactions to complete a single task. Each of these steps adds processing time and consumes compute resources.

At a basic level, latency increases because agents do not simply generate one response. They plan, evaluate options, call tools, check results, and sometimes repeat these steps. In multi-agent systems, coordination between agents adds further overhead.

At a more advanced level, inefficiencies appear when agents make unnecessary calls, fail to reuse previous results, or operate without clear limits on how long they can reason or retry actions. Over time, these behaviors compound and lead to slow system responses.

The Problem

High latency directly affects user experience. Slow responses reduce trust in the system and limit where agents can be used, especially in time-sensitive workflows such as operations monitoring or customer support.

Operational cost is the other side of the same issue. Each model call consumes compute resources, and frequent calls quickly increase usage costs. When multiple agents operate simultaneously, costs can grow faster than expected, making large-scale deployment financially unsustainable.

From a business perspective, this creates uncertainty. Teams may struggle to predict expenses or justify expanding agent-based systems beyond pilot projects.

The Solution

Reducing latency and controlling cost requires treating AI agents as performance-sensitive systems rather than simple model wrappers.

At a basic level, caching is one of the most effective techniques. When agents encounter similar queries or repeated steps, cached responses prevent redundant model calls. This immediately reduces both response time and cost.

At a more advanced level, batching and request optimization are used. Instead of making separate model calls for each small step, systems combine related operations into fewer requests. Some architectures also limit how often agents can re-plan or retry actions unless conditions clearly change.
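
Batching can be sketched as folding several small, independent questions into one request and splitting the reply. The sketch assumes the model is instructed to answer in a numbered format that can be parsed back; `fake_model` is a hypothetical offline stand-in that follows that format so the example runs without a real API.

```python
import re
from typing import Callable, Optional


def batch_prompt(questions: list[str]) -> str:
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    return "Answer each question on its own line, prefixed with its number.\n" + numbered


def parse_batch(reply: str, n: int) -> list[Optional[str]]:
    answers: dict[int, str] = {}
    for line in reply.splitlines():
        match = re.match(r"\s*(\d+)\.\s*(.*)", line)
        if match:
            answers[int(match.group(1))] = match.group(2).strip()
    # A missing answer comes back as None so only that item needs a retry.
    return [answers.get(i) for i in range(1, n + 1)]


def ask_batched(questions: list[str], model: Callable[[str], str]) -> list[Optional[str]]:
    # One model call for the whole batch instead of one call per question.
    return parse_batch(model(batch_prompt(questions)), len(questions))


def fake_model(prompt: str) -> str:
    # Offline stand-in for a real model: "answers" each numbered line by upper-casing it.
    replies = []
    for line in prompt.splitlines():
        match = re.match(r"(\d+)\. (.*)", line)
        if match:
            replies.append(f"{match.group(1)}. {match.group(2).upper()}")
    return "\n".join(replies)


print(ask_batched(["tag ticket 17", "tag ticket 18", "tag ticket 19"], fake_model))
```

Batching trades one slightly larger request for several small ones; it works best for independent steps, since a step that depends on an earlier answer cannot be folded into the same call.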

Well-designed systems also monitor agent behavior closely. By tracking which actions contribute the most to latency and cost, teams can refine workflows, simplify reasoning steps, and remove unnecessary operations.

In mature deployments, performance budgets are enforced. Agents are given explicit limits on time, number of calls, or cost per task, ensuring predictable behavior even under heavy load.
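
A per-task budget can be enforced by a small object that every model or tool call must charge before it runs. This is a sketch under assumed limits: `TaskBudget` is hypothetical, and the cost figures are placeholders, since real per-call prices depend on the provider and model.

```python
import time


class BudgetExceeded(Exception):
    pass


class TaskBudget:
    """Hypothetical per-task limits on wall-clock time, number of calls, and estimated spend."""

    def __init__(self, max_seconds: float, max_calls: int, max_cost: float):
        self.deadline = time.monotonic() + max_seconds
        self.calls_left = max_calls
        self.cost_left = max_cost

    def charge(self, estimated_cost: float) -> None:
        # Check every limit before the call is made, so the task stops instead of overrunning.
        if time.monotonic() > self.deadline:
            raise BudgetExceeded("time limit reached")
        if self.calls_left <= 0:
            raise BudgetExceeded("call limit reached")
        if estimated_cost > self.cost_left:
            raise BudgetExceeded("cost limit reached")
        self.calls_left -= 1
        self.cost_left -= estimated_cost


budget = TaskBudget(max_seconds=30, max_calls=5, max_cost=0.10)
steps_run = 0
try:
    for _ in range(10):  # an agent loop that would otherwise keep going
        budget.charge(estimated_cost=0.03)
        steps_run += 1   # the model or tool call would happen here
except BudgetExceeded as reason:
    print(f"stopped after {steps_run} steps: {reason}")
```

With these placeholder numbers the loop stops after three steps on the cost limit, well before the call or time limits apply. Whichever limit is hit first, the outcome is predictable and can be logged.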

Why This Challenge Matters

High latency and operational cost are not theoretical concerns. They directly determine whether AI agents can move from experimental tools to reliable production systems.

If left unmanaged, these issues can prevent adoption, strain budgets, and reduce confidence in agent-based automation. Addressing them early allows organizations to scale AI agents responsibly while maintaining performance and cost control.

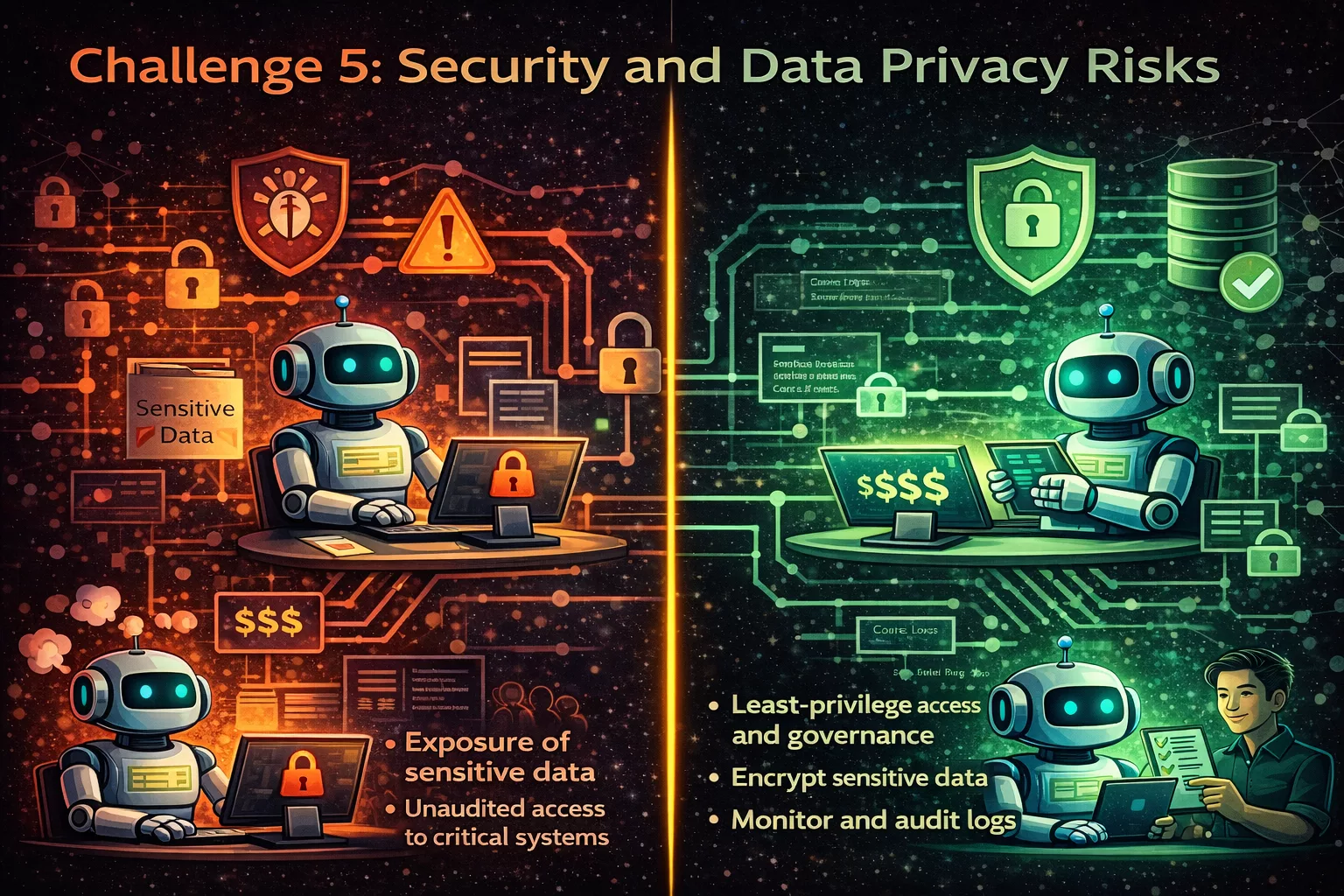

Challenge 5: Security and Data Privacy Risks

Security and data privacy risks are among the most critical challenges in AI agent systems. Unlike traditional AI models that operate within limited boundaries, AI agents often interact with multiple systems, access sensitive data, and make decisions across environments. This broader access significantly increases the potential impact of security failures.

As AI agents become more autonomous and interconnected, protecting data and controlling access is no longer optional. It is a foundational requirement.

What Goes Wrong

AI agents frequently connect to external APIs, internal databases, cloud services, and third-party tools. In doing so, they may handle credentials, personal data, proprietary information, or operational details.

Security risks arise when agents are given overly broad permissions, poorly scoped access, or insufficient monitoring. In some cases, an agent may unintentionally expose sensitive data through logs, outputs, or external tool calls. In others, misconfigured integrations can allow unauthorized access or data leakage.

At a more advanced level, risks also emerge from prompt injection, tool manipulation, or indirect data exposure. If an agent is influenced by untrusted inputs, it may reveal information or perform actions outside its intended scope.

The Problem

When security controls are weak, the consequences can be severe. Sensitive customer data may be exposed, internal systems may be compromised, and regulatory compliance may be violated. These risks are amplified because AI agents can act quickly and at scale.

Another key issue is traceability. Without proper logging and audit mechanisms, it becomes difficult to determine what data an agent accessed, what actions it took, and why a security incident occurred. This lack of visibility increases recovery time and complicates accountability.

From an organizational perspective, security concerns often slow down AI adoption. Teams may limit agent capabilities or avoid deployment altogether due to uncertainty around data protection and risk management.

The Solution

Mitigating security and data privacy risks requires a layered approach that combines technical controls, system design, and governance practices.

At a basic level, agents should operate under the principle of least privilege. This means granting access only to the data and tools necessary for a specific task, and nothing more. Credentials should be scoped, time-limited, and rotated regularly.
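
Least privilege with scoped, time-limited credentials can be illustrated with a small credential object that names exactly which actions an agent may perform and when the grant expires. `ScopedCredential`, `issue_credential`, and the scope strings are hypothetical; in practice this role is usually played by an identity provider or a cloud IAM service, which also handles rotation.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ScopedCredential:
    """Hypothetical short-lived credential that names exactly what an agent may do."""

    scopes: frozenset[str]  # e.g. {"orders:read"}; every other action is denied
    expires_at: float       # time.monotonic() value after which the grant is void
    token: str = field(default_factory=lambda: secrets.token_urlsafe(16))

    def allows(self, action: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        return now < self.expires_at and action in self.scopes


def issue_credential(scopes: set[str], ttl_seconds: float) -> ScopedCredential:
    # Grant only the scopes this task needs, and only for a short period;
    # the next task receives a fresh credential with a new token.
    return ScopedCredential(frozenset(scopes), time.monotonic() + ttl_seconds)


cred = issue_credential({"orders:read"}, ttl_seconds=900)
print(cred.allows("orders:read"))                           # True
print(cred.allows("orders:refund"))                         # False: never granted
print(cred.allows("orders:read", now=cred.expires_at + 1))  # False: expired
```

Denying by default, and making expiry part of the credential itself, means a leaked or misused token is limited both in what it can do and in how long it works.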

At a more advanced level, systems implement access controls, data masking, and encryption to protect sensitive information both at rest and in transit. Audit logs track every action an agent takes, enabling teams to review behavior and investigate incidents.
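
Data masking and audit logging can be combined so that sensitive values are redacted before they are written anywhere. The regular expressions below cover only email addresses and 13- to 16-digit card-like numbers and are illustrative, not a complete PII detector; the `AuditLog` class and its JSON record format are assumptions.

```python
import json
import re
import time

# Illustrative patterns only: email addresses and 13- to 16-digit card-like numbers.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD = re.compile(r"\b(?:\d[ -]?){12,15}\d\b")


def mask(text: str) -> str:
    return CARD.sub("[CARD]", EMAIL.sub("[EMAIL]", text))


class AuditLog:
    """Hypothetical append-only audit log; a real one would write to durable storage."""

    def __init__(self) -> None:
        self.records: list[str] = []

    def record(self, agent: str, action: str, detail: str) -> None:
        # Mask before writing, so the log never stores the raw sensitive values.
        entry = {"ts": time.time(), "agent": agent, "action": action, "detail": mask(detail)}
        self.records.append(json.dumps(entry))


log = AuditLog()
log.record("support-agent", "lookup_customer",
           "Customer jane.doe@example.com paid with 4111 1111 1111 1111")
print(json.loads(log.records[0])["detail"])  # Customer [EMAIL] paid with [CARD]
```

Each record still shows which agent did what and when, which is what an investigation needs, without turning the audit trail into a second copy of the sensitive data.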

Additional safeguards include input validation, output filtering, and monitoring for unusual activity. These measures help detect and prevent misuse before it escalates into a larger issue.

Well-designed systems treat security as part of the agent’s architecture, not as an external add-on. Security boundaries are clearly defined, enforced, and continuously monitored.

Why This Challenge Matters

Security and data privacy risks directly affect trust. If users or organizations cannot trust that an AI agent will handle data responsibly, adoption will stall regardless of the system’s capabilities.

As AI agents are increasingly used in regulated environments such as finance, healthcare, and enterprise operations, strong security practices become essential for compliance and long-term viability. Addressing this challenge early allows organizations to scale agent systems with confidence rather than caution.

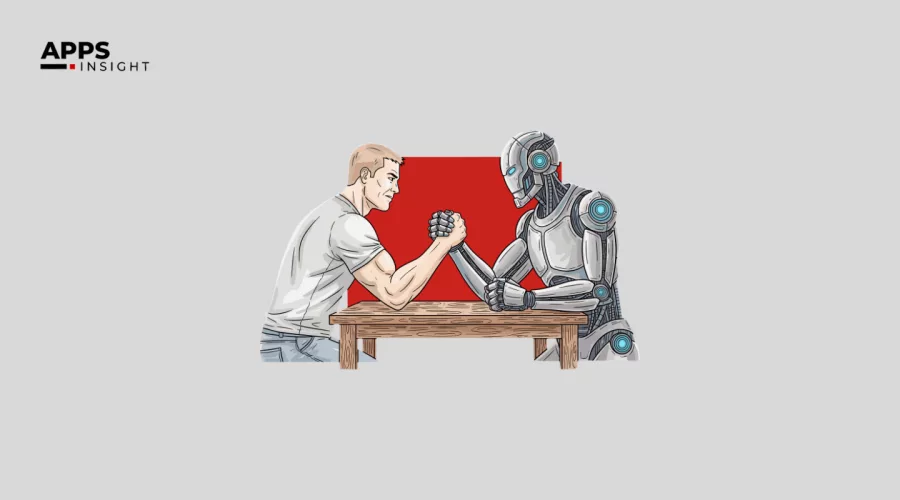

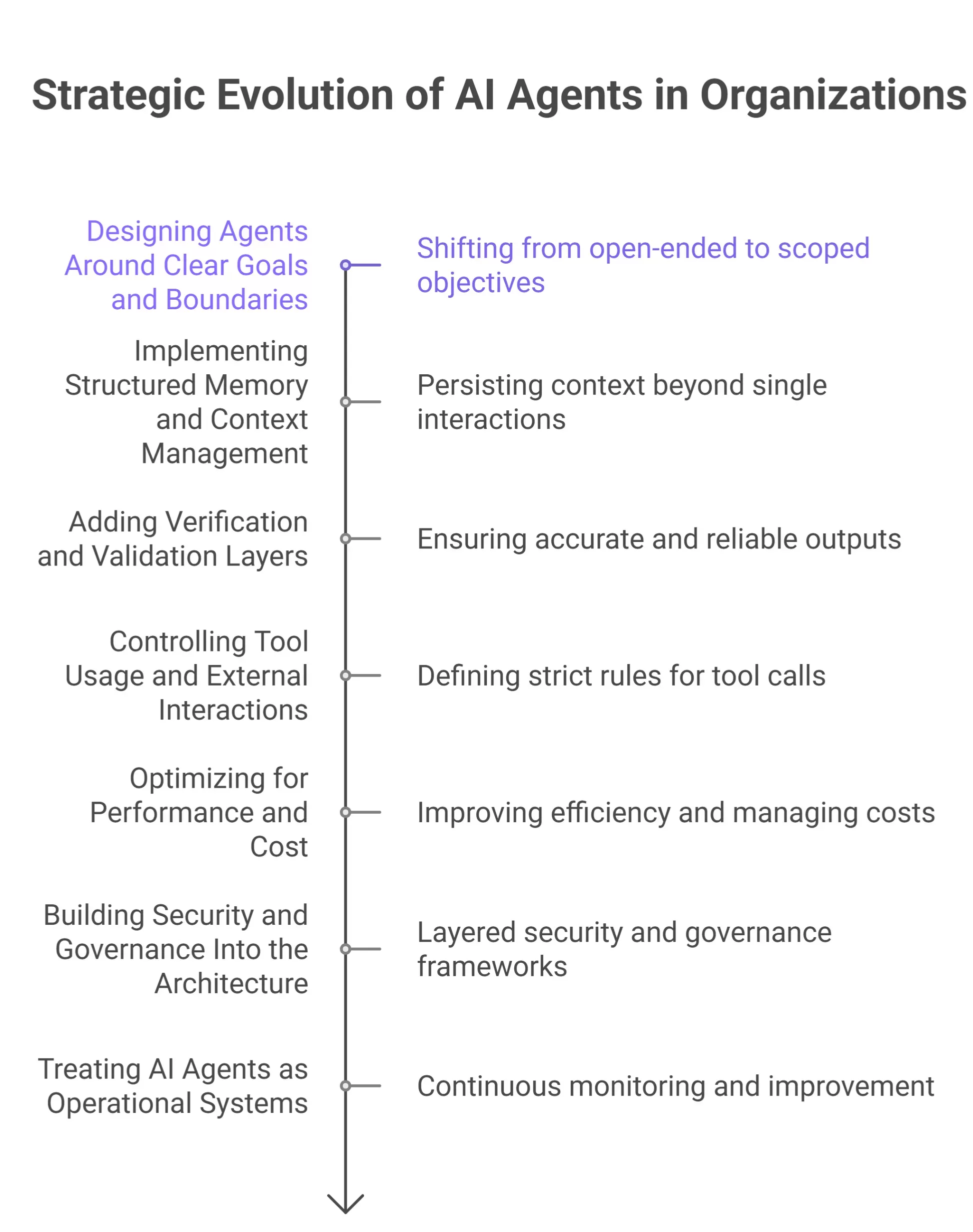

How Organizations Are Addressing These Challenges

Organizations deploying AI agents are learning that success depends less on model capability and more on system design, governance, and operational discipline. Rather than trying to eliminate every limitation, they focus on building structures that reduce risk, improve reliability, and make agent behavior easier to control and understand.

Below are the key ways organizations are addressing AI agent challenges in practice.

Designing Agents Around Clear Goals and Boundaries

One of the first changes organizations make is shifting from open-ended agent behavior to clearly scoped objectives. Instead of allowing agents to act freely, teams define explicit goals, constraints, and stopping conditions.

This approach limits unintended actions and makes agent behavior more predictable. It also simplifies evaluation, since success can be measured against predefined outcomes rather than subjective expectations.

At a more advanced level, organizations separate strategic goals from execution logic. Humans define what the agent should achieve, while the system controls how it is allowed to act.
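
The split between human-defined goals and system-controlled execution can be sketched as a task specification that people write and a runner that enforces it. `TaskSpec`, `run`, and the toy policies are hypothetical; the point is that the allowed actions, the success condition, and the step limit live outside the model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TaskSpec:
    """Hypothetical task definition written by a human, not by the agent."""

    goal: str
    allowed_actions: set[str]        # constraint: nothing else may run
    is_done: Callable[[dict], bool]  # explicit success condition
    max_steps: int = 10              # explicit stopping condition


def run(spec: TaskSpec, propose_action: Callable[[dict], str]) -> tuple[str, dict]:
    state: dict = {"steps": 0, "log": []}
    while not spec.is_done(state):
        if state["steps"] >= spec.max_steps:
            return "step limit reached", state
        action = propose_action(state)  # the agent suggests; the runner decides
        if action not in spec.allowed_actions:
            return f"blocked: {action}", state  # outside the boundary: stop and escalate
        state["log"].append(action)
        state["steps"] += 1
    return "done", state


spec = TaskSpec(
    goal="Collect three supplier quotes",
    allowed_actions={"search_suppliers", "request_quote"},
    is_done=lambda s: s["log"].count("request_quote") >= 3,
    max_steps=6,
)


def propose_action(state: dict) -> str:
    # Toy policy standing in for the model: search once, then request quotes.
    return "search_suppliers" if not state["log"] else "request_quote"


status, final_state = run(spec, propose_action)
print(status, final_state["steps"])  # done 4
```

Because success is measured by `is_done` rather than by the agent's own judgment, evaluation reduces to checking the outcome against what was defined up front.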

Implementing Structured Memory and Context Management

To address context loss, organizations introduce structured memory layers that persist beyond a single interaction. These systems store important decisions, assumptions, and task states in a retrievable format.

Rather than feeding entire histories back into the model, agents retrieve only the most relevant context when needed. This reduces confusion, improves consistency, and lowers computational cost.

In mature deployments, memory policies define what information must be saved, when it should be updated, and how long it should be retained.
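
A memory policy can be expressed as data: which categories are saved at all, whether a new value replaces the old one, and how long entries are retained. The categories, retention periods, and `PolicyMemory` class below are assumptions chosen for illustration, not recommended values.

```python
import time
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: category -> (retention in seconds, replace previous value on update?)
POLICY = {
    "task_state": (3600, True),          # keep for an hour; the latest value wins
    "decision": (7 * 24 * 3600, False),  # keep for a week; append-only history
}


@dataclass
class Record:
    category: str
    key: str
    value: str
    stored_at: float


class PolicyMemory:
    def __init__(self, policy: dict = POLICY) -> None:
        self.policy = policy
        self.records: list[Record] = []

    def save(self, category: str, key: str, value: str, now: Optional[float] = None) -> bool:
        if category not in self.policy:
            return False  # not on the list of things worth remembering
        now = time.time() if now is None else now
        _, replace = self.policy[category]
        if replace:
            self.records = [r for r in self.records if (r.category, r.key) != (category, key)]
        self.records.append(Record(category, key, value, now))
        return True

    def active(self, now: Optional[float] = None) -> list[Record]:
        # Drop anything older than its category's retention period.
        now = time.time() if now is None else now
        self.records = [r for r in self.records if now - r.stored_at < self.policy[r.category][0]]
        return list(self.records)


mem = PolicyMemory()
mem.save("task_state", "step", "collecting quotes", now=0)
mem.save("task_state", "step", "comparing quotes", now=10)
mem.save("decision", "vendor", "shortlist two vendors", now=10)
mem.save("small_talk", "greeting", "hello", now=10)  # rejected by the policy
print([r.value for r in mem.active(now=20)])
```

Keeping the policy in one table makes it reviewable: anyone can see what the agent is allowed to remember and for how long, which also matters for the data retention concerns discussed under security.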

Adding Verification and Validation Layers

Organizations reduce incorrect or misleading outputs by introducing verification steps into agent workflows. Instead of trusting a single response, systems validate outputs against trusted data sources or secondary checks.

This may include retrieval-based grounding, rule-based validation, or human-in-the-loop review for high-impact decisions. The goal is not to slow the system down, but to prevent confident errors from propagating into downstream actions.

Over time, these validation layers become a standard part of production-grade agent systems.
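
Human-in-the-loop review for high-impact decisions can be sketched as a routing rule: low-impact actions run automatically, while high-impact ones are queued for a person to approve. Which actions count as high impact, the refund threshold, and the `Router` and `Action` names are hypothetical policy choices.

```python
from dataclasses import dataclass, field

HIGH_IMPACT = {"delete_records", "change_permissions"}  # hypothetical: always need a human
AUTO_APPROVE_LIMIT = 100.0                               # hypothetical: larger refunds need a human


@dataclass
class Action:
    name: str
    amount: float = 0.0


@dataclass
class Router:
    executed: list[str] = field(default_factory=list)
    review_queue: list[Action] = field(default_factory=list)

    def needs_review(self, action: Action) -> bool:
        return action.name in HIGH_IMPACT or (
            action.name == "issue_refund" and action.amount > AUTO_APPROVE_LIMIT
        )

    def submit(self, action: Action) -> str:
        if self.needs_review(action):
            self.review_queue.append(action)  # waits for a person; nothing runs yet
            return "pending review"
        self.executed.append(action.name)     # low impact: proceed automatically
        return "executed"

    def approve_next(self) -> str:
        # Called when a reviewer approves the oldest pending action (IndexError if none).
        action = self.review_queue.pop(0)
        self.executed.append(action.name)
        return action.name


router = Router()
print(router.submit(Action("issue_refund", 40.0)))   # executed
print(router.submit(Action("issue_refund", 900.0)))  # pending review
print(router.submit(Action("delete_records")))       # pending review
```

Only the actions that cross the threshold wait for a person, so review effort is spent where a confident error would be most expensive.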

Controlling Tool Usage and External Interactions

To prevent tool misuse and unnecessary actions, organizations define strict rules around when and how agents can call external tools or APIs.

This includes limiting permissions, enforcing usage thresholds, and monitoring execution patterns. If an agent repeatedly calls the same tool or behaves unexpectedly, the system can intervene automatically.

Advanced setups include execution monitoring that evaluates whether a tool call was necessary and aligned with the current goal.

Optimizing for Performance and Cost

As agent systems scale, organizations invest in performance optimization. This includes reducing redundant model calls, caching frequently used results, and batching tasks where possible.

Rather than increasing compute resources indefinitely, teams focus on smarter orchestration. These optimizations improve responsiveness and keep operational costs manageable, especially in multi-agent environments.

Building Security and Governance Into the Architecture

Security is addressed through layered controls rather than single safeguards. Organizations apply least-privilege access, encrypt sensitive data, and maintain detailed audit logs of agent activity.

Governance frameworks define who can configure agents, what data they can access, and how incidents are handled. This is especially important in regulated industries, where compliance and traceability are mandatory.

By embedding security into the system architecture, organizations reduce risk without limiting agent usefulness.

Treating AI Agents as Operational Systems

A key mindset shift is treating AI agents as long-running operational systems rather than experimental tools. This means continuous monitoring, regular evaluation, and ongoing improvement.

Organizations track agent behavior over time, identify failure patterns, and adjust system design accordingly. This operational approach helps teams scale agent deployments with confidence instead of reacting to problems after they occur.

Organizations that succeed with AI agents do not aim for full autonomy immediately. They build controlled systems that balance automation with oversight, flexibility with safety.

By addressing challenges through design, monitoring, and governance, they turn AI agents into reliable components of larger workflows rather than unpredictable black boxes.

Final Takeaway

AI agents represent a significant step toward more autonomous systems, but they come with clear limitations. Context loss, hallucinations, tool misuse, cost, and security risks are not edge cases; they are common challenges that must be actively managed.

By applying proven solutions and treating agents as part of a larger system, organizations can use them effectively without overestimating their capabilities. For deeper insights, technical breakdowns, and industry-focused analysis, readers can explore more related articles on AppsInsight, where AI systems are discussed with a practical, implementation-first perspective.